Gradient descent in $n$-dimensional space in the context of an RBF network

I am trying to implement an algorithm to perform gradient descent in an $n$-dimensional space in the context of an RBF network. My network has 5 inputs and 1 output. Its output is a weighted sum of Gaussian radial basis functions: $$f(x_i)=\sum_{k=1}^n w_k e^{-\lambda_k\|x_i - \mu_k\|^2}$$

where:

- $n$ is the number of centroids in the network

- $w_k$ is the weight between the hidden layer and the output layer for the $k^{th}$ centroid

- $\lambda_k$ is the width parameter of the basis function for the $k^{th}$ centroid

- $x_i$ is the $i^{th}$ training data input (vector)

- $\mu_k$ is the $k^{th}$ centroid (vector)

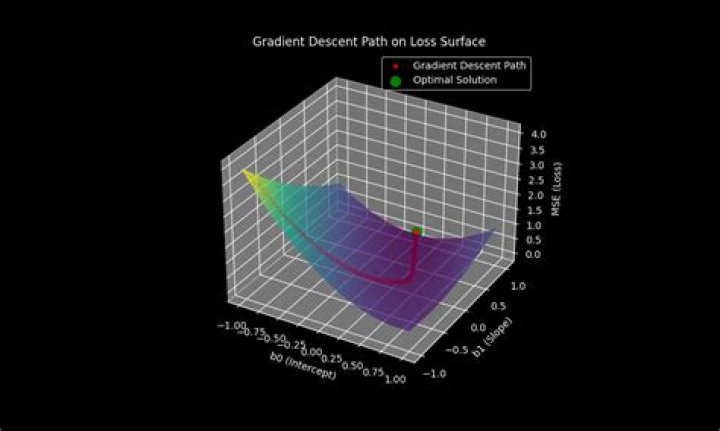
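To make the notation concrete, here is a minimal NumPy sketch of the forward pass as I understand it. The function names (`rbf_design_matrix`, `rbf_forward`) and the toy data are my own placeholders, not part of any library.

```python
import numpy as np

def rbf_design_matrix(X, centroids, lambdas):
    """Return Phi with Phi[i, k] = exp(-lambdas[k] * ||X[i] - centroids[k]||^2)."""
    # Squared Euclidean distance between every input and every centroid: shape (p, n)
    sq_dist = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-lambdas[None, :] * sq_dist)

def rbf_forward(X, centroids, lambdas, weights):
    """Network output f(x_i) for every row x_i of X."""
    return rbf_design_matrix(X, centroids, lambdas) @ weights

# Toy data (made up): 4 samples with 5 inputs each, 3 centroids
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 5))
centroids = rng.normal(size=(3, 5))
lambdas = np.ones(3)
weights = np.array([0.5, -1.0, 2.0])

outputs = rbf_forward(X, centroids, lambdas, weights)  # one output per sample
```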

My goal is to find the values of $w_k$ and $\lambda_k$ that minimize the squared error. I plan on doing that iteratively:

1. Fix $\lambda_k$ and use the pseudo-inverse to solve for $w_k$

2. Fix $w_k$ and solve for $\lambda_k$ using gradient descent by minimizing the error w.r.t. $\lambda_k$

3. Repeat steps 1 and 2 until convergence is achieved (or some other stopping criterion is met).
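The pseudo-inverse step can be sketched as below, assuming the hidden-layer activations have already been collected in a matrix `Phi` with one row per training sample and one column per centroid. `solve_weights` is a hypothetical helper name and the numbers are made up for illustration.

```python
import numpy as np

def solve_weights(Phi, y):
    """Least-squares weights for fixed widths: w = pinv(Phi) @ y."""
    return np.linalg.pinv(Phi) @ y

# Made-up activations (p = 4 samples, n = 2 centroids) and targets
Phi = np.array([[1.0, 0.2],
                [0.5, 0.9],
                [0.1, 1.0],
                [0.8, 0.3]])
true_w = np.array([2.0, -1.0])
y = Phi @ true_w              # targets generated from known weights

w = solve_weights(Phi, y)     # should recover true_w up to rounding
```

`np.linalg.lstsq(Phi, y, rcond=None)` solves the same least-squares problem without forming the pseudo-inverse explicitly.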

I have already found the weights by fixing each $\lambda_k$ to 1 (an arbitrary starting value) and using the pseudo-inverse matrix. Now I need to find values for each $\lambda_k$ that minimize the squared error. From what I understand, I need to:

- Find the partial derivative (w.r.t. $\lambda_k$) of the error function: $$S=\sum_{i=1}^p(y_i - f(x_i))^2$$ where $f(x_i)$ is the function above, $y_i$ is the actual output for the $i^{th}$ training example and $p$ is the number of entries in the training set.

- Equate that partial derivative to zero.

- Solve for all the $\lambda_k$.
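Written out with the chain rule, and using only the definitions of $S$ and $f$ above, I believe the partial derivative from the first step would be:

$$\frac{\partial f(x_i)}{\partial \lambda_k} = -\,w_k\,\|x_i-\mu_k\|^2\,e^{-\lambda_k\|x_i-\mu_k\|^2}$$

$$\frac{\partial S}{\partial \lambda_k} = \sum_{i=1}^p 2\,(y_i - f(x_i))\left(-\frac{\partial f(x_i)}{\partial \lambda_k}\right) = 2\,w_k\sum_{i=1}^p (y_i - f(x_i))\,\|x_i-\mu_k\|^2\,e^{-\lambda_k\|x_i-\mu_k\|^2}$$

Each $\lambda_k$ appears inside an exponential, and $f(x_i)$ depends on all the $\lambda_k$ at once, so as far as I can tell setting these $n$ equations to zero does not give a closed-form solution in general. That is why I want to follow the gradient iteratively instead.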

I do not fully understand how to perform gradient descent w.r.t. $\lambda_k$.

Can anyone help me clarify how to perform gradient descent in an $n$-dimensional space? I need to find a vector of updates $\delta_k$ to apply to each $\lambda_k$, so that $\lambda_k := \lambda_k + \delta_k$.
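My understanding of the general idea, as a minimal sketch: every coordinate gets the update $\delta_k = -\eta\,\partial S/\partial\lambda_k$, and all coordinates are updated together from the same gradient vector. The function `gradient_descent`, the learning rate $\eta$ (`learning_rate`), and the toy quadratic objective are assumptions made purely for illustration.

```python
import numpy as np

def gradient_descent(grad_fn, start, learning_rate=0.1, n_steps=200):
    """Repeatedly step against the gradient, updating all coordinates at once."""
    params = np.asarray(start, dtype=float).copy()
    for _ in range(n_steps):
        delta = -learning_rate * grad_fn(params)  # delta_k for every coordinate
        params = params + delta                   # params_k := params_k + delta_k
    return params

# Toy objective (made up): g(v) = ||v - target||^2, whose gradient is 2 * (v - target)
target = np.array([1.0, -2.0, 0.5])
result = gradient_descent(lambda v: 2.0 * (v - target), start=np.zeros(3))
```

For the RBF problem, `grad_fn` would return the vector of $\partial S/\partial\lambda_k$ values and `start` would be the current $\lambda_k$.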

My current algorithm for one iteration of gradient descent:

    for each item x in the training data set
        feed x forward through the network and get the output
        for each centroid k
            λk = λk - μ * (gradient of the cost function)  // μ is a constant learning rate (not the centroid μk)
        end loop
    end loop

where:

$\frac{\partial S}{\partial\lambda_k} = \sum_{i=1}^p 2d_i^2(y_i - e^{-d_i^2\lambda_k})e^{-d_i^2\lambda_k} = \sum_{i=1}^p 2d_i^2(y_i-f(x_i))f(x_i)$

where $d_i = \|x_i - \mu_k\|$ is the Euclidean distance between the $i^{th}$ input and the $k^{th}$ centroid, and $y_i$ is the output from the training set; both are known values.
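Here is how I would sketch the batch update for all $\lambda_k$ in NumPy. It assumes the chain-rule form of the gradient, which includes the factor $w_k$ and the full network output $f(x_i)$, so it may differ from my expression above. All function names, the toy data, and the learning rate value are hypothetical.

```python
import numpy as np

def sq_distances(X, centroids):
    """D2[i, k] = ||X[i] - centroids[k]||^2, shape (p, n)."""
    return ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)

def squared_error(X, y, centroids, lambdas, weights):
    """S = sum_i (y_i - f(x_i))^2."""
    Phi = np.exp(-lambdas * sq_distances(X, centroids))
    return np.sum((y - Phi @ weights) ** 2)

def grad_lambdas(X, y, centroids, lambdas, weights):
    """Vector of dS/dlambda_k for fixed weights."""
    D2 = sq_distances(X, centroids)
    Phi = np.exp(-lambdas * D2)
    residual = y - Phi @ weights                  # y_i - f(x_i), shape (p,)
    # dS/dlambda_k = 2 * w_k * sum_i residual_i * D2[i, k] * Phi[i, k]
    return 2.0 * weights * (residual @ (D2 * Phi))

# Toy problem (made up): 20 samples, 5 inputs, 3 centroids
rng = np.random.default_rng(1)
X = 0.5 * rng.normal(size=(20, 5))
centroids = 0.5 * rng.normal(size=(3, 5))
weights = np.array([1.0, -0.5, 2.0])
true_lambdas = np.array([0.5, 1.0, 1.5])
y = np.exp(-true_lambdas * sq_distances(X, centroids)) @ weights

lambdas = np.ones(3)          # starting point, as in the question
learning_rate = 0.01          # assumed value; would need tuning in practice
for _ in range(100):
    lambdas = lambdas - learning_rate * grad_lambdas(X, y, centroids, lambdas, weights)
```

The gradient is accumulated over the whole training set before each update, rather than once per training item as in my pseudocode. Comparing `grad_lambdas` against a finite-difference estimate of `squared_error` is a quick way to check the derivative.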

Thank you very much!
