How is PNG lossless given that it has a compression parameter?

PNG files are said to use lossless compression. However, whenever I am in an image editor such as GIMP and try to save an image as a PNG file, it asks for a compression parameter, which ranges between 0 and 9. If PNG has a compression parameter that affects the visual precision of the compressed image, how can it be lossless?

Do I get lossless behavior only when I set the compression parameter to 9?

107 Answers

PNG is lossless. GIMP is most likely just not using the best word in this case. Think of it as "quality of compression", or in other words, "level of compression". With lower compression, you get a bigger file, but it takes less time to produce, whereas with higher compression, you get a smaller file that takes longer to produce. Typically you get diminishing returns (i.e., not as much decrease in size compared to the increase in time it takes) when going up to the highest compression levels, but it's up to you.
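To make the size-versus-effort tradeoff concrete, here is a minimal sketch using Python's standard `zlib` module (the same DEFLATE family that PNG relies on). The `raw` buffer is a made-up stand-in for pixel data, not a real image, and the exact sizes will depend on the input.

```python
import zlib

# Hypothetical stand-in for raw pixel data: a repetitive byte pattern.
raw = bytes(range(256)) * 400

sizes = {}
for level in range(10):
    compressed = zlib.compress(raw, level)
    sizes[level] = len(compressed)
    # Whatever the level, decompression returns the original bytes exactly.
    assert zlib.decompress(compressed) == raw

for level, size in sizes.items():
    print(f"level {level}: {size} bytes")
```

Only the size column changes between levels; the round trip succeeds at every one of them.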

PNG is compressed, but lossless

The compression level is a tradeoff between file size and encoding/decoding speed. To overly generalize, even non-image formats, such as FLAC, have similar concepts.

Different compression levels, same decoded output

Although the file sizes are different, due to the different compression levels, the actual decoded output will be identical.

You can compare the MD5 hashes of the decoded outputs with ffmpeg using the MD5 muxer.

This is best shown with some examples:

Create PNG files:

$ ffmpeg -i input -vframes 1 -compression_level 0 0.png

$ ffmpeg -i input -vframes 1 -compression_level 100 100.png

By default, ffmpeg will use -compression_level 100 for PNG output.

Compare file size:

$ du -h *.png
228K 0.png
4.0K 100.png

Decode the PNG files and show MD5 hashes:

$ ffmpeg -loglevel error -i 0.png -f md5 -

3d3fbccf770a51f9d81725d4e0539f83

$ ffmpeg -loglevel error -i 100.png -f md5 -

3d3fbccf770a51f9d81725d4e0539f83

Since both hashes are the same, you can be assured that the decoded outputs (the uncompressed, raw video) are exactly the same.

PNG compression happens in two stages.

- Pre-compression re-arranges the image data so that it will be more compressible by a general purpose compression algorithm.

- The actual compression is done by DEFLATE, which searches for, and eliminates duplicate byte-sequences by replacing them with short tokens.

Since step 2 is a very time/resource intensive task, the underlying zlib library (an encapsulation of raw DEFLATE) takes a compression parameter where 0 means no compression, 1 the fastest compression, and 9 the best compression. That's where the 0-9 range comes from, and GIMP simply passes that parameter down to zlib. Observe that at level 0 your PNG will actually be slightly larger than the equivalent bitmap.
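The "slightly larger at level 0" effect can be seen with zlib alone. This is a small sketch with Python's standard `zlib` module; the 1000 zero bytes are an arbitrary stand-in for uncompressed pixel data, and a real PNG adds its own chunk overhead on top.

```python
import zlib

raw = bytes(1000)  # 1000 zero bytes, an arbitrary stand-in for pixel data

stored = zlib.compress(raw, 0)  # level 0: data is stored, not compressed
best = zlib.compress(raw, 9)    # level 9: the most effort zlib will spend

# The level-0 stream carries a header, block framing and a checksum,
# so it ends up a few bytes longer than the input.
print(len(raw), len(stored), len(best))
```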

However, level 9 is only the "best" that zlib will attempt, and is still very much a compromise solution.

To really get a feel for this, if you're willing to spend 1000x more processing power on an exhaustive search, you can gain 3-8% higher data density using zopfli instead of zlib.

The compression is still lossless; it's just a more optimal DEFLATE representation of the data. This approaches the limits of what zlib-compatible libraries can decode, and is therefore the true "best" compression that it's possible to achieve using PNG.

A primary motivation for the PNG format was to create a replacement for GIF that was not only free but also an improvement over it in essentially all respects. As a result, PNG compression is completely lossless - that is, the original image data can be reconstructed exactly, bit for bit - just as in GIF and most forms of TIFF.

PNG uses a 2-stage compression process:

- Pre-compression: filtering (prediction)

- Compression: DEFLATE (see wikipedia)

The precompression step is called filtering, which is a method of reversibly transforming the image data so that the main compression engine can operate more efficiently.

As a simple example, consider a sequence of bytes increasing uniformly from 1 to 255:

1, 2, 3, 4, 5, .... 255

Since there is no repetition in the sequence, it compresses either very poorly or not at all. But a trivial modification of the sequence - namely, leaving the first byte alone but replacing each subsequent byte by the difference between it and its predecessor - transforms the sequence into an extremely compressible set:

1, 1, 1, 1, 1, .... 1

The above transformation is lossless, since no bytes were omitted, and is entirely reversible. The compressed size of this series will be much reduced, but the original series can still be perfectly reconstituted.
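The 1-to-255 example can be tried directly. This sketch uses Python's standard `zlib` module in place of a PNG encoder; the delta step is a simplified version of the idea behind PNG's Sub filter, not the filter code of any particular library.

```python
import zlib

original = bytes(range(1, 256))  # 1, 2, 3, ..., 255

# Keep the first byte, then store each byte's difference from its predecessor.
delta = bytes([original[0]] + [(original[i] - original[i - 1]) % 256
                               for i in range(1, len(original))])

# Reverse the transformation with a running sum.
restored = bytearray()
total = 0
for d in delta:
    total = (total + d) % 256
    restored.append(total)

# Compare how well each form compresses.
print(len(zlib.compress(original)), len(zlib.compress(delta)))
```

The second number is far smaller than the first, and `restored` matches `original` byte for byte.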

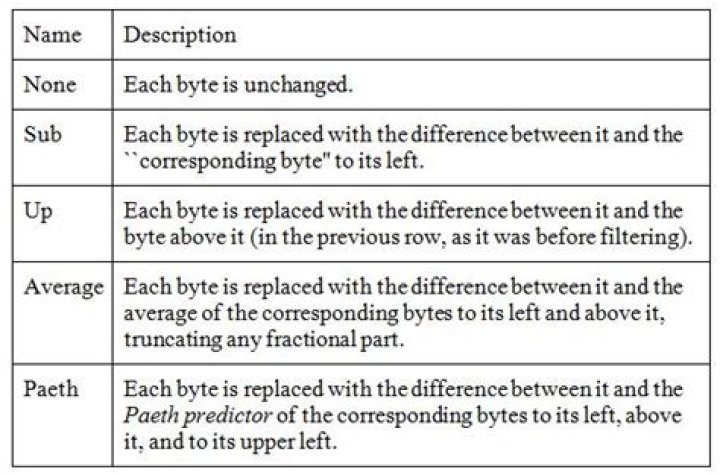

Actual image-data is rarely that perfect, but filtering does improve compression in grayscale and truecolor images, and it can help on some palette images as well. PNG supports five types of filters, and an encoder may choose to use a different filter for each row of pixels in the image :

The algorithm works on bytes, but for large pixels (e.g., 24-bit RGB or 64-bit RGBA) only corresponding bytes are compared, meaning the red components of the pixel-colors are handled separately from the green and blue pixel-components.

To choose the best filter for each row, an encoder would need to test all possible combinations. This is clearly impossible, as even a 20-row image would require testing over 95 trillion combinations, where "testing" would involve filtering and compressing the entire image.
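The 95 trillion figure is simply five filter choices per row, raised to the number of rows. A quick check in Python (the filter names in the comment come from the PNG specification):

```python
FILTER_TYPES = 5  # None, Sub, Up, Average, Paeth
rows = 20

combinations = FILTER_TYPES ** rows
print(f"{combinations:,}")  # 95,367,431,640,625
```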

Compression levels are normally defined as numbers between 0 (none) and 9 (best). These refer to tradeoffs between speed and size, and relate to how many combinations of row-filters are to be tried. There is no standard for these compression levels, so every image editor may have its own algorithm for deciding how many filters to try when optimizing the image size.

Compression level 0 means that filters are not used at all, which is fast but wasteful. Higher levels mean that more and more combinations are tried on image-rows and only the best ones are retained.

I would guess that the simplest approach to the best compression is to incrementally test-compress each row with each filter, save the smallest result, and repeat for the next row. This amounts to filtering and compressing the entire image five times, which may be a reasonable trade-off for an image that will be transmitted and decoded many times. Lower compression values will do less, at the discretion of the tool's developer.
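The row-by-row guess above can be sketched as follows. This is an illustration under stated assumptions, not how GIMP, libpng or any other encoder actually chooses filters: each row is test-compressed in isolation with Python's `zlib`, whereas a real encoder compresses the whole filtered stream. The function names and the tiny 8-bit grayscale gradient are made up for the example; the five predictors follow the PNG specification.

```python
import zlib

def paeth(a, b, c):
    """Paeth predictor: pick whichever of left, up, upper-left is closest to a + b - c."""
    p = a + b - c
    pa, pb, pc = abs(p - a), abs(p - b), abs(p - c)
    if pa <= pb and pa <= pc:
        return a
    return b if pb <= pc else c

def predict(ftype, a, b, c):
    """Predicted value for filter types 0-4 (None, Sub, Up, Average, Paeth)."""
    if ftype == 0:
        return 0
    if ftype == 1:
        return a
    if ftype == 2:
        return b
    if ftype == 3:
        return (a + b) // 2
    return paeth(a, b, c)

def filter_row(ftype, row, prior, bpp):
    """Replace each byte with its difference from the prediction (mod 256)."""
    out = bytearray()
    for i, x in enumerate(row):
        a = row[i - bpp] if i >= bpp else 0      # byte to the left
        b = prior[i]                             # byte above
        c = prior[i - bpp] if i >= bpp else 0    # byte above-left
        out.append((x - predict(ftype, a, b, c)) % 256)
    return bytes(out)

def unfilter_row(ftype, filt, prior, bpp):
    """Exact inverse of filter_row."""
    row = bytearray()
    for i, f in enumerate(filt):
        a = row[i - bpp] if i >= bpp else 0
        b = prior[i]
        c = prior[i - bpp] if i >= bpp else 0
        row.append((f + predict(ftype, a, b, c)) % 256)
    return bytes(row)

def choose_filters(rows, bpp):
    """Greedy choice: per row, keep the filter whose output compresses smallest."""
    prior = bytes(len(rows[0]))  # the row above the first row counts as all zeros
    chosen = []
    for row in rows:
        sizes = [(len(zlib.compress(filter_row(t, row, prior, bpp))), t)
                 for t in range(5)]
        _, best = min(sizes)
        chosen.append((best, filter_row(best, row, prior, bpp)))
        prior = row
    return chosen

def decode(chosen, bpp):
    """Undo the filters row by row to recover the original rows."""
    prior = bytes(len(chosen[0][1]))
    rows = []
    for ftype, filt in chosen:
        row = unfilter_row(ftype, filt, prior, bpp)
        rows.append(row)
        prior = row
    return rows

# Hypothetical 64x8 grayscale gradient, one byte per pixel.
rows = [bytes((x + y) % 256 for x in range(64)) for y in range(8)]
chosen = choose_filters(rows, bpp=1)
print([ftype for ftype, _ in chosen])
```

Whichever filters are picked, `decode(chosen, 1)` returns the original rows, which is the lossless part.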

In addition to filters, the compression level might also affect the zlib compression level which is a number between 0 (no Deflate) and 9 (maximum Deflate). How the specified 0-9 levels affect the usage of filters, which are the main optimization feature of PNG, is still dependent on the tool's developer.

The conclusion is that PNG has a compression parameter that can reduce the file-size very significantly, all without the loss of even a single pixel.

Sources:

Wikipedia Portable Network Graphics

libpng documentation Chapter 9 - Compression and Filtering

OK, I am too late for the bounty, but here is my answer anyway.

PNG is always lossless. It uses the Deflate/Inflate algorithm, similar to those used in zip programs.

The Deflate algorithm searches for repeated sequences of bytes and replaces them with tags. The compression level setting specifies how much effort the program spends on finding the optimal combination of byte sequences, and how much memory is reserved for that. It is a compromise between time and memory usage vs. compressed file size. However, modern computers are so fast and have enough memory that there is rarely a need to use anything other than the highest compression setting.

Many PNG implementations use zlib library for compression. Zlib has nine compression levels, 1-9. I don't know the internals of Gimp, but since it has compression level settings 0-9 (0 = no compression), I would assume this setting simply selects the compression level of zlib.

The Deflate algorithm is a general-purpose compression algorithm; it was not designed for compressing pictures. Unlike most other lossless image file formats, the PNG format is not limited to that. PNG compression takes advantage of the knowledge that we are compressing a 2D image. This is achieved by so-called filters.

("Filter" is actually a somewhat misleading term here. It does not change the image contents, it just encodes them differently. A more accurate name would be delta encoder.)

The PNG specification defines 5 different filters (including 0 = none). A filter replaces absolute pixel values with the difference from the neighboring pixel to the left, above, diagonally above-left, or a combination of those. This may significantly improve the compression ratio. Each scan line of the image can use a different filter. The encoder can optimize the compression by choosing the best filter for each line.

For details of PNG file format, see PNG Specification.

Since there is a virtually infinite number of combinations, it is not possible to try them all. Therefore, different kinds of strategies have been developed for finding an effective combination. Most image editors probably do not even try to optimize the filters line by line but instead just use a fixed filter (most likely Paeth).

A command line program pngcrush tries several strategies to find the best result. It can significantly reduce the size of PNG file created by other programs, but it may take quite a bit of time on larger images. See Source Forge - pngcrush.

Compression level in lossless stuff is always just trading encode resources (usually time, sometimes also RAM) vs. bitrate. Quality is always 100%.

Of course, lossless compressors can NEVER guarantee any actual compression. Random data is incompressible, there's no pattern to find and no similarity. Shannon information theory and all that. The whole point of lossless data compression is that humans usually work with highly non-random data, but for transmission and storage, we can compress it down into as few bits as possible. Hopefully down to as close as possible to the Kolmogorov complexity of the original.
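A quick way to see the "random data is incompressible" point is to feed pseudo-random bytes and a run of zeros to the same compressor. This is a sketch with Python's standard `zlib` and `random` modules; the seed and buffer size are arbitrary choices.

```python
import random
import zlib

rng = random.Random(0)  # fixed seed so runs are repeatable
noise = bytes(rng.getrandbits(8) for _ in range(100_000))
flat = bytes(100_000)   # 100,000 zero bytes

noise_size = len(zlib.compress(noise, 9))
flat_size = len(zlib.compress(flat, 9))

# The noise stays at roughly its original size; the zeros shrink to almost nothing.
print(noise_size, flat_size)
```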

Whether it's zip or 7z generic data, png images, flac audio, or h.264 (in lossless mode) video, it's the same thing. With some compression algorithms, like lzma (7zip) and bzip2, cranking up the compression setting will increase the DECODER's CPU time (bzip2) or more often just the amount of RAM needed (lzma and bzip2, and h.264 with more reference frames). Often the decoder has to save more decoded output in RAM because decoding the next byte could refer to a byte decoded many megabytes ago (e.g. a video frame that is most similar to one from half a second ago would get encoded with references to 12 frames back). Same thing with bzip2 and choosing a large block size, but that also decompresses slower. lzma has a variable size dictionary, and you could make files that would require 1.5GB of RAM to decode.

Firstly, PNG is always lossless. The apparent paradox is due to the fact that there are two different kinds of compression possible (for any kind of data): lossy and lossless.

Lossless compression squeezes down the data (i.e. file size) using various tricks, keeping everything and without making any approximation. As a result, it is possible that lossless compression will not actually be able to compress things at all. (Technically, data with high entropy can be very hard or even impossible to compress for lossless methods.) Lossy compression approximates the real data; the approximation is imperfect, but this "throwing away" of precision typically allows better compression.

Here is a trivial example of lossless compression: if you have an image made of 1,000 black pixels, instead of storing the value for black 1,000 times, you can store a count (1000) and a value (black) thus compressing a 1000 pixel "image" into just two numbers. (This is a crude form of a lossless compression method called run-length encoding).
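The 1,000 black pixels example looks like this as a minimal run-length encoder in Python. The function names and the choice of 0 for black are made up for the illustration; PNG itself does not use this scheme.

```python
def rle_encode(pixels):
    """Collapse consecutive equal values into (count, value) pairs."""
    runs = []
    for p in pixels:
        if runs and runs[-1][1] == p:
            runs[-1][0] += 1
        else:
            runs.append([1, p])
    return [(count, value) for count, value in runs]

def rle_decode(runs):
    """Expand (count, value) pairs back into the original sequence."""
    out = []
    for count, value in runs:
        out.extend([value] * count)
    return out

BLACK = 0
image = [BLACK] * 1000
encoded = rle_encode(image)
print(encoded)  # [(1000, 0)]
```

Decoding `encoded` gives back all 1,000 pixels, so nothing was approximated along the way.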
